Artificial intelligence is often introduced through the language of possibility. It promises smarter systems, faster decisions, and more efficient societies. In discussions about disability, these promises take on a particularly hopeful tone. AI is frequently presented as a technological breakthrough that will finally remove barriers for disabled people through automated captioning, voice assistants, navigation tools, and personalised learning platforms. In a country like India, where millions of people continue to face barriers to education, employment, healthcare, and public infrastructure, the appeal of such solutions is powerful.

But technological optimism can obscure a deeper set of questions. What assumptions about bodies, ability, and productivity are built into these systems? Whose experiences shape the data that trains them? And what happens when technologies designed to increase accessibility inherit the same social biases that have historically marginalised disabled people? Disability scholarship has long argued that technologies rarely arrive in neutral spaces; they enter societies already structured by inequalities and cultural expectations about what bodies should be able to do (Goodley, 2014; Garland-Thomson, 2011).

Across policy documents and technology reports, AI is often described as a tool that will ‘empower’ disabled people by compensating for impairment. Image recognition applications promise to help blind users navigate environments. Speech interfaces promise communication support for people with mobility or speech impairments. Educational technologies promise personalised learning for students with cognitive differences. These innovations are important, and for many disabled users they genuinely improve everyday life. At the same time, the language of empowerment can mask a deeper assumption: that disability is primarily a technical problem waiting to be solved by better design, better data, or better algorithms.

Disability studies has consistently challenged this assumption. Disability is not simply located in individual bodies; it emerges through relationships between bodies, environments, and social expectations (Goodley, 2014; Shakespeare, 2018). When buildings lack ramps, classrooms rely exclusively on written tests, or workplaces assume constant productivity, disability is produced through social arrangements rather than physical conditions alone. Technologies developed in such contexts may appear progressive while quietly reinforcing the very norms that produce exclusion. AI systems therefore enter a landscape already shaped by ideas about normality, efficiency, and value.

These questions become particularly important in India, where technological development intersects with deep social inequalities. AI systems increasingly shape everyday decisions, from hiring platforms and digital banking to government service delivery. Yet research examining algorithmic systems in the Indian context has shown that bias often emerges when models are trained on incomplete or socially skewed data (Sambasivan et al., 2021). These biases do not affect only gender or caste representation; they also shape how disability is imagined, categorised, and interpreted by machines.

Recent studies evaluating large language models and datasets in India have found that stereotypes related to disability appear in automated responses and associations within AI systems (Santhosh et al., 2025). When prompted with disability-related descriptions, models often generate narratives that frame disabled people as dependent, tragic, or socially isolated. These patterns are not surprising. Training data reflects existing cultural narratives, many of which treat disability primarily as misfortune or charity. When algorithms learn from these patterns, they reproduce them at scale, embedding them into digital infrastructures that appear objective and neutral.

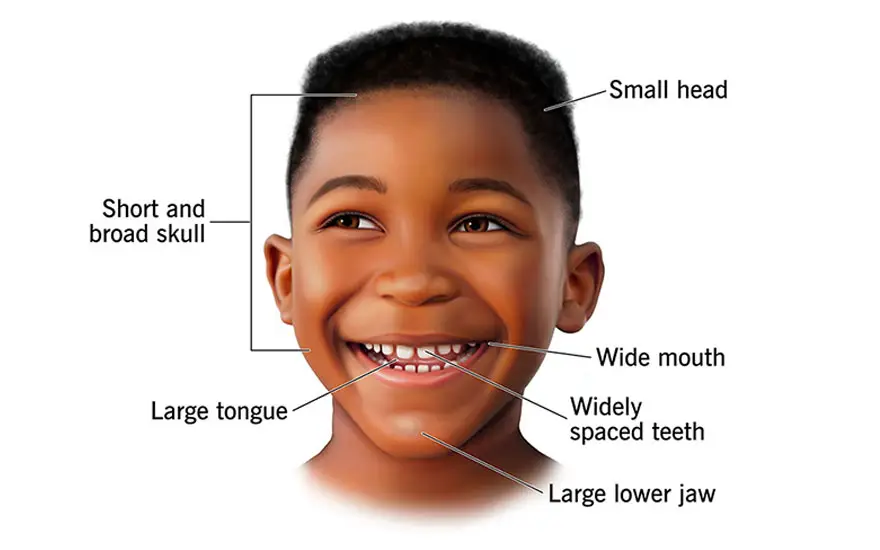

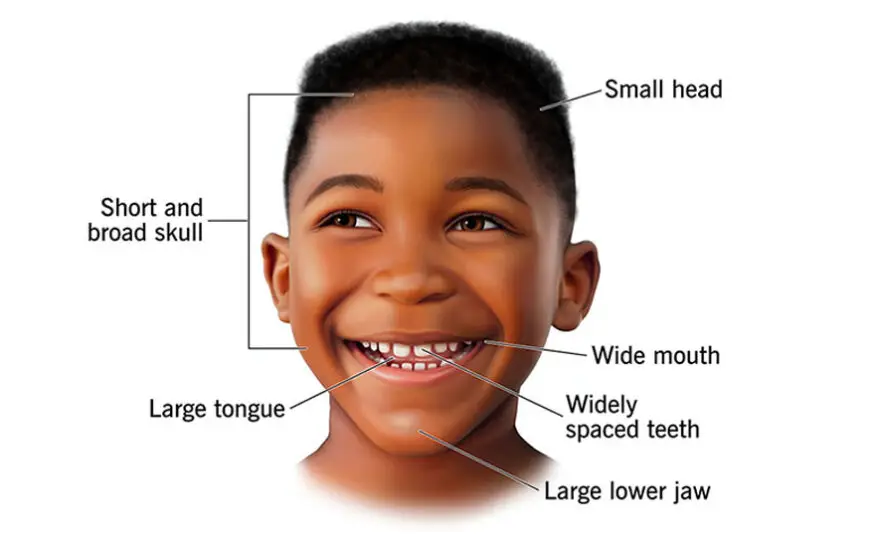

Representation also matters in visual technologies. Research examining AI image generation has shown that when systems produce images of disabled people, they frequently rely on narrow visual tropes, most commonly representing disability through wheelchairs or medicalised imagery (Tevissen, 2024). This reduction of disability to a single visual marker erases the diversity of disabled experiences. It also reinforces a cultural imagination in which disability is always visible, always exceptional, and always defined through assistive devices. The everyday reality of disability, which often involves invisible conditions, fluctuating capacities, and diverse identities, disappears from view.

In India, these representational limitations intersect with broader issues of linguistic and cultural diversity. Many AI systems are trained primarily on English-language datasets produced in Western contexts. As a result, they often struggle to interpret regional languages, cultural references, or local expressions related to disability. When technologies designed elsewhere are deployed in India without careful adaptation, they risk misrepresenting the very communities they claim to support. Bias, in this sense, reflects whose knowledge and experiences are included in the data economy, and whose are left out.

Infrastructure further complicates the promise of AI accessibility. Technologies that rely on constant connectivity, expensive devices, or advanced digital literacy may remain inaccessible to many people. In rural areas and low-income communities, disabled individuals often encounter multiple barriers simultaneously: limited internet access, inaccessible educational institutions, and weak enforcement of disability rights legislation. In such contexts, AI solutions can appear futuristic while the basic conditions of accessibility remain unresolved. Technology risks becoming a symbolic gesture towards inclusion rather than a structural transformation.

Critical research on infrastructure and accessibility reminds us that design decisions are never purely technical (Hamraie, 2017). Ramps, elevators, captioning systems, and digital interfaces all reflect choices about whose participation is expected and valued. AI systems are part of this same design landscape. When accessibility is treated as an afterthought, technologies often replicate dominant assumptions about independence, productivity, and speed. Disabled people are expected to adapt to systems rather than systems adapting to the diversity of human bodies.

The rapid growth of generative AI raises additional concerns about authorship, knowledge, and representation. Generative models increasingly shape how information is summarised, translated, and circulated. When these systems reproduce stereotypes about disability or omit disabled perspectives entirely, they subtly influence public discourse. Automated summaries, educational materials, and search results may reinforce narrow narratives about disability without users realising that these narratives originate from algorithmic patterns rather than careful scholarship.

None of this means that AI cannot contribute to accessibility. Many disabled people actively use and shape technological tools in creative ways. Captioning systems, navigation applications, and voice interfaces already support participation in education and work. The question is not whether AI should exist in disability contexts, but how it is developed and governed. A critical perspective shifts attention away from technological novelty and towards the social conditions under which technology is produced.

One emerging approach emphasises participatory design. Instead of designing technologies for disabled people, researchers increasingly argue for designing with them. Participatory design treats disabled users not as passive recipients of assistive technologies but as experts in accessibility. Their insights can reveal everyday barriers that designers might overlook, from subtle interface problems to broader social assumptions embedded in technology. When disabled communities participate in research and development, accessibility becomes a collaborative process rather than a technical fix.

Another important shift involves reframing accessibility as a collective issue rather than an individual accommodation. Disability scholarship has long emphasised that designing for disability often benefits everyone. Captions assist language learners and people in noisy environments. Voice interfaces help users with temporary injuries or limited literacy. Flexible learning technologies support diverse learning styles. When accessibility is integrated from the beginning, technologies become more adaptable and inclusive for a wide range of users (Garland-Thomson, 2011).

In the Indian context, such an approach also requires attention to intersectionality. Disability rarely exists in isolation from other social identities. Caste, gender, rural location, and economic status shape how disabled people experience technology and access public services. AI systems that ignore these intersections risk reinforcing layered inequalities. Ethical frameworks for AI therefore need to move beyond abstract principles and engage directly with the lived realities of marginalised communities.

Ultimately, the conversation about AI and disability is not only about innovation but about imagination. Technologies reflect the worlds we expect to build. If AI systems are designed within cultures that prioritise efficiency, productivity, and standardised ability, they will reproduce those priorities. If they are shaped by a broader commitment to accessibility, diversity, and social justice, they may help create more inclusive infrastructures. The challenge lies not simply in building smarter machines, but in cultivating more critical ways of thinking about technology itself.

A critical engagement with AI therefore invites a shift in perspective. Instead of asking how artificial intelligence can ‘fix’ disability, we need to ask how disability perspectives can transform artificial intelligence. Disability scholarship has long questioned dominant ideas about normality, independence, and value. When these insights inform technological design, they reveal possibilities that purely technical approaches overlook.

The future of AI will not be determined only by engineers or algorithms. It will be shaped by the social values that guide how these systems are imagined, built, and governed. If disabled people remain peripheral to that process, AI risks reproducing the same exclusions it claims to address. But if disability perspectives are treated as central forms of knowledge, they may help reorient technological development towards a more expansive understanding of human difference. In that sense, the most transformative contribution disability can make to artificial intelligence is not simply better accessibility tools, but a fundamentally different vision of what inclusive technology might mean.

Dr. Ankita Mishra

She is a Research Associate at the iHuman research centre at the University of Sheffield, where she works on the “Disability Matters” project. She specialises in research on disability, social inequality, and inclusion, contributing to the university’s work in the social sciences and humanities.

References

Garland-Thomson, R. (2011) ‘Misfits: A feminist materialist disability concept’, Hypatia, 26(3), pp. 591–609. https://doi.org/10.1111/j.1527-2001.2011.01206.x

Goodley, D. (2014) Dis/ability Studies: Theorising Disablism and Ableism. London: Routledge.

Hamraie, A. (2017) Building Access: Universal Design and the Politics of Disability. Minneapolis: University of Minnesota Press.

Sambasivan, N., Kapania, S., Highfill, H., Akrong, D., Paritosh, P. and Aroyo, L. (2021) ‘“Everyone wants to do the model work, not the data work”: Data cascades in high-stakes AI’, Proceedings of the 2021 CHI Conference on Human Factors in Computing Systems. New York: ACM. https://doi.org/10.1145/3411764.3445518

Santhosh, G. S. et al. (2025) ‘IndiCASA: Evaluating social bias in large language models in India’, arXiv preprint. Available at: https://arxiv.org/

Shakespeare, T. (2018) Disability: The Basics. London: Routledge.

Tevissen, Y. (2024) ‘Disability representations in automatic image generation systems’, arXiv preprint. Available at: https://arxiv.org/